Everyone has an AI roadmap. Hardly anyone has an AI strategy.

by Yannis Larios

Somewhere in Europe right now, a CEO is presenting her AI programme to the board. Three initiatives. Colour-coded by department. A Gantt chart stretching to Q4 2026. The slide looks serious. The ambition is genuine. The applause is polite.

Eighteen months from now, several of those initiatives will quietly disappear. Some will still be 'in pilot'. One will have consumed most of the budget. The rest will survive mainly as PowerPoint archaeology.

This is usually called an execution problem. The underlying problem is design.

The wrong mental model is costing you years

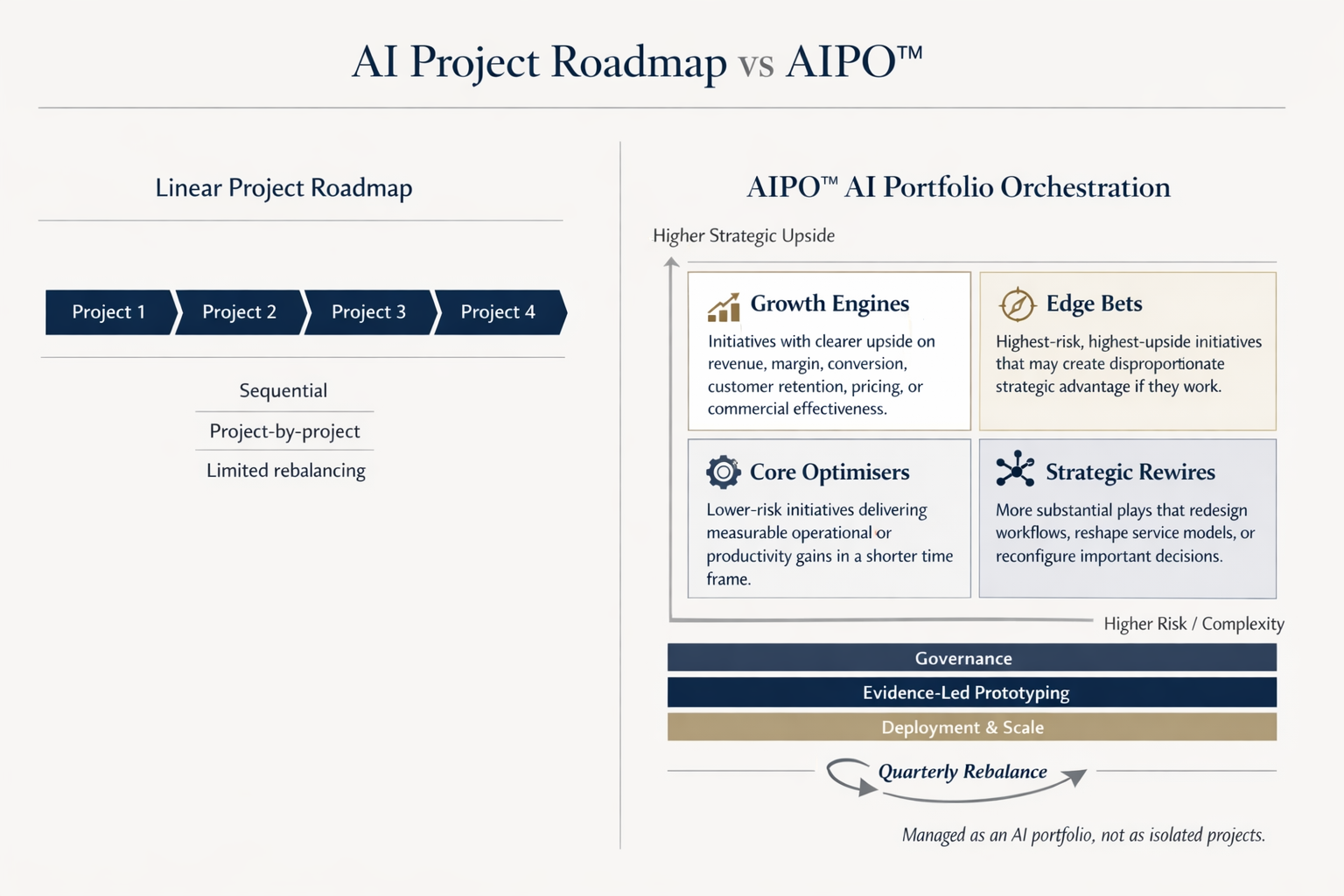

Most boards still govern AI as a list of isolated IT projects: prioritised by function, judged individually, managed like software releases. That model worked for ERP. It is poorly suited to AI.

AI initiatives behave as socio-technical ventures. They reshape decisions, workflows and expertise across the organisation whilst simultaneously carrying model risk, adoption uncertainty and regulatory exposure. Several forms of complexity arrive at once, not sequentially. That demands innovation management — a disciplined way to place bets, test assumptions under real conditions, absorb failure without losing direction, and redeploy resources before the investment case hardens around the wrong things.

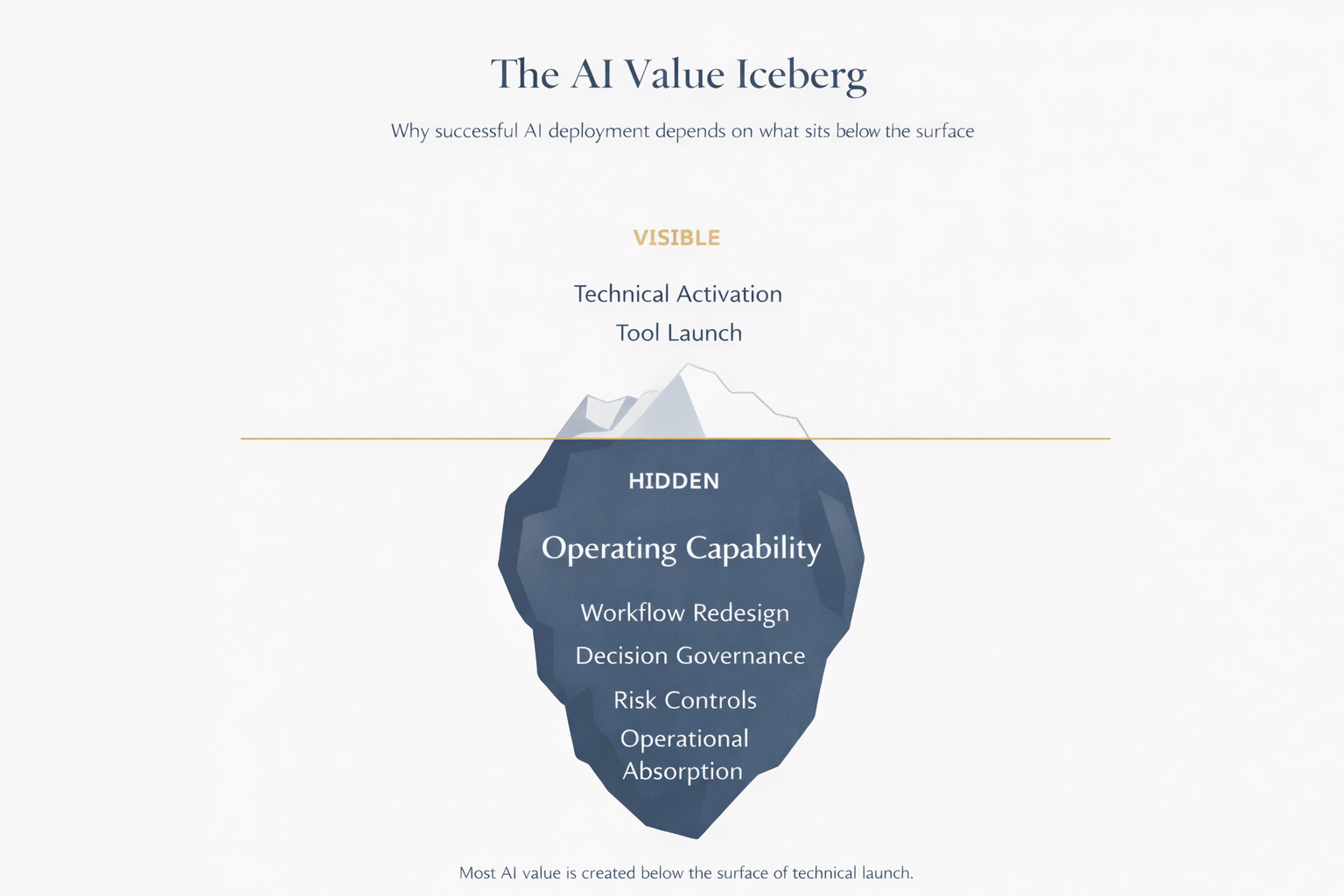

Fewer than 30 per cent of enterprise AI initiatives reach full deployment. Of those that do, most struggle to demonstrate clear portfolio-level value. The architecture is the problem, not the ambition.

The private banker didn't build you a mutual fund

There is an analogy I return to in boardroom conversations when this comes up.

Mass-affluent clients are often presented with something off the shelf: a generic fund, a standard allocation, a product that is tidy, respectable and easy to distribute at scale. Sophisticated clients are handled differently. A private banker begins with mandate, risk tolerance, time horizon, liquidity needs and the realities of the client's situation. From there, a tailored portfolio is constructed, monitored and rebalanced over time.

Too many organisations are approaching AI in the mass-affluent way. They assemble a familiar set of use cases, adopt standard templates, and produce a roadmap that looks coherent without being built around the institution's strategic priorities, constraints, risk appetite and capacity for change.

AI strategy, handled seriously, is portfolio management.

How AIPO™ (AI Portfolio Orchestration) works

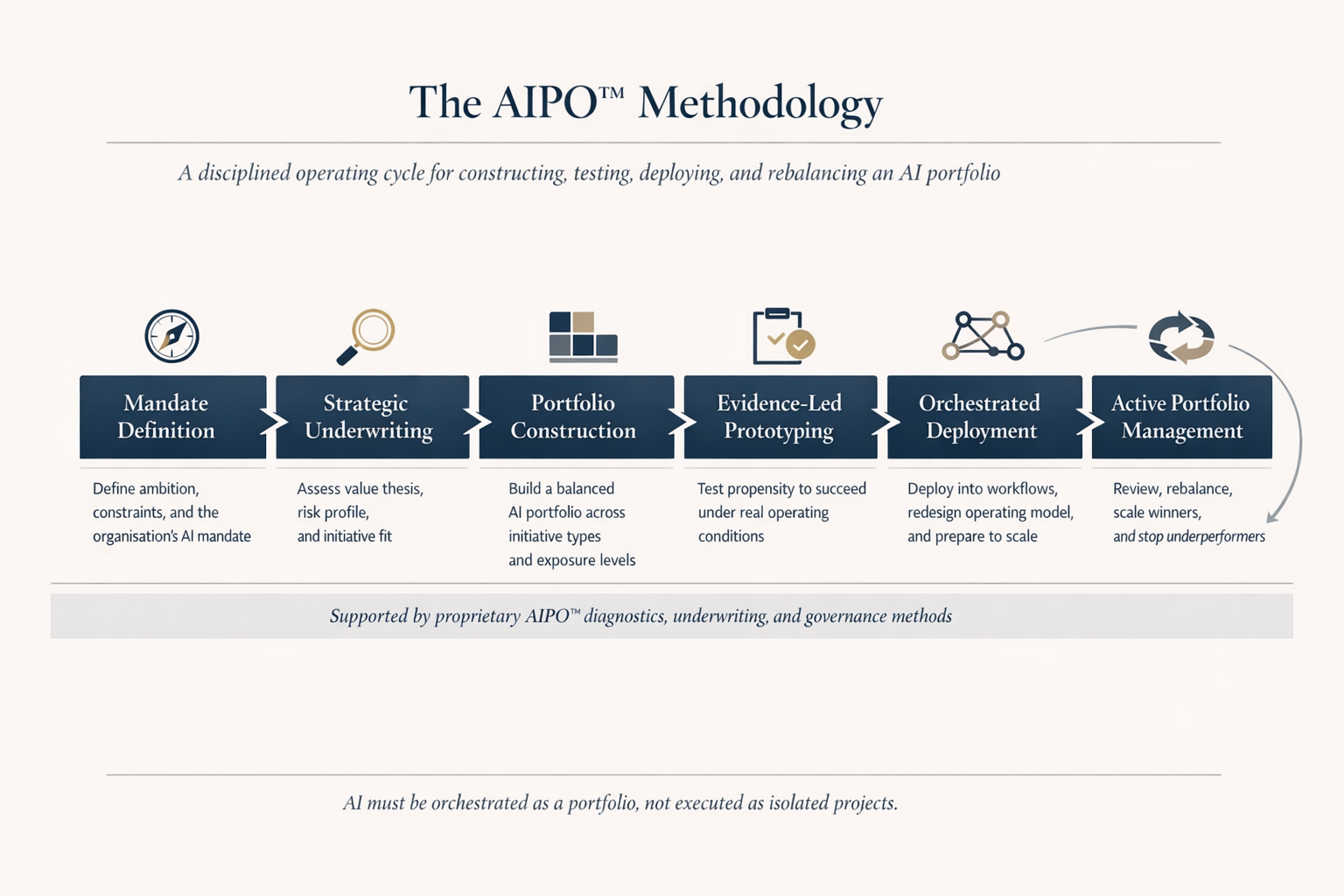

AIPO™ — my AI Portfolio Orchestration methodology — follows six phases, each designed to address a failure mode that standard project governance does not reach.

Phase 1, Mandate Definition, establishes what AI must actually deliver: the strategic outcomes, time horizon, risk tolerance and practical constraints — data readiness, governance maturity, leadership bandwidth — that determine what the portfolio can realistically absorb. Most organisations skip this and pay for it later.

Phase 2, Strategic Underwriting, treats each candidate initiative as a strategic investment. Value thesis, conditions for success, risk profile, regulatory exposure, and — critically — external pattern recognition. What are competitors doing? Where is overinvestment forming? Shortlists regularly change when this scan is done honestly.

Phase 3, Portfolio Construction, selects initiatives as a group rather than in isolation. Core Optimisers deliver near-term, visible wins. Growth Engines and Strategic Rewires pursue more ambitious outcomes. Edge Bets are deliberate, higher-risk plays; most organisations are better served by running only one at a time.

One European financial institution arrived at a portfolio review with eleven AI initiatives across three business lines. Mapped as a portfolio rather than a roadmap, eight of the eleven turned out to share the same underlying data infrastructure. A single governance failure in that infrastructure would have stalled the majority of active work at once. Two initiatives were consolidated and one was stopped. Identifying this at Phase 3 cost weeks. Discovering it during deployment would have cost considerably more.
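The concentration check in that example can be sketched in a few lines of Python. This is a minimal illustration, not part of the AIPO™ toolkit: the function name, the initiative and dependency names, and the 50 per cent threshold are all assumptions made for the example.

```python
from collections import Counter


def shared_dependency_exposure(portfolio, threshold=0.5):
    """Return {dependency: share of initiatives relying on it}
    for every dependency whose share exceeds the threshold.

    `portfolio` maps an initiative name to the list of things it depends on.
    The 0.5 default threshold is an illustrative assumption.
    """
    total = len(portfolio)
    # Count each dependency once per initiative, even if listed twice.
    counts = Counter(dep for deps in portfolio.values() for dep in set(deps))
    return {dep: n / total for dep, n in counts.items() if n / total > threshold}


# Hypothetical portfolio mirroring the example: eleven initiatives,
# eight of which rely on the same data platform.
portfolio = {f"initiative_{i}": ["shared_data_platform"] for i in range(1, 9)}
portfolio.update({
    "initiative_9": ["crm_feed"],
    "initiative_10": ["document_store"],
    "initiative_11": ["crm_feed"],
})

exposure = shared_dependency_exposure(portfolio)
for dependency, share in exposure.items():
    print(f"{dependency}: {share:.0%} of initiatives depend on it")
```

A roadmap view lists the eleven initiatives one by one. A check of this kind looks across them and surfaces the single dependency that most of the portfolio rests on.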

Phase 4, Evidence-Led Prototyping, replaces the traditional proof of concept with the smallest credible operational test capable of exposing critical uncertainties early — in real workflows, with real users, real data and real exceptions. The result is a disciplined decision: proceed, redesign, hold or stop.

Phase 5, Orchestrated Deployment, moves validated initiatives from approval to operating capability. Launching a tool is the easy part. The harder work concerns workflow redesign, decision governance, control adjustments and the activities that should disappear because the AI-enabled process has made them redundant. Scale is earned, not assumed.

Phase 6, Active Portfolio Management, reviews the portfolio as a whole on a fixed cadence. Which initiatives are creating value proportionate to their cost? Which have stalled? What enters the next cycle, and what leaves to make room for it? This is how portfolios compound.
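As a rough sketch of what such a review might compute, the Python below totals value and cost across the portfolio and flags initiatives that are underperforming or stalled. Everything in it is assumed for illustration: the field names, the figures, the value-to-cost ratio of 1.0 and the six-month stall threshold. A real review would set these from the mandate defined in Phase 1.

```python
from dataclasses import dataclass


@dataclass
class Initiative:
    name: str
    value_to_date: float  # realised value, in any consistent currency unit
    cost_to_date: float   # spend so far, in the same unit
    months_since_last_milestone: int


def review(portfolio, min_value_ratio=1.0, stall_months=6):
    """Summarise the portfolio as a whole and flag individual initiatives.

    Both thresholds are illustrative assumptions, not prescribed values.
    """
    total_value = sum(i.value_to_date for i in portfolio)
    total_cost = sum(i.cost_to_date for i in portfolio)
    portfolio_return = (total_value - total_cost) / total_cost if total_cost else 0.0
    underperforming = [
        i.name for i in portfolio
        if i.cost_to_date > 0 and i.value_to_date / i.cost_to_date < min_value_ratio
    ]
    stalled = [
        i.name for i in portfolio
        if i.months_since_last_milestone >= stall_months
    ]
    return {
        "portfolio_return": portfolio_return,
        "underperforming": underperforming,
        "stalled": stalled,
    }


# Hypothetical figures for three initiatives.
portfolio = [
    Initiative("claims_triage", value_to_date=300.0, cost_to_date=100.0,
               months_since_last_milestone=1),
    Initiative("pricing_engine", value_to_date=50.0, cost_to_date=100.0,
               months_since_last_milestone=2),
    Initiative("chat_assistant", value_to_date=0.0, cost_to_date=50.0,
               months_since_last_milestone=8),
]

result = review(portfolio)
print(result)
```

The point of the sketch is the unit of measurement: return is computed for the portfolio as a whole, and individual initiatives are judged against it on a fixed schedule, not when something breaks.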

Why this matters now, not next year

The EU AI Act's core governance requirements apply from 2 August 2026. Regulatory positioning belongs at the mandate and underwriting stages; it should not be retrofitted during implementation. Boards that treat it as a compliance annex tend to encounter the cost of that decision at the worst possible moment.

The organisations already operating this way are pulling ahead. Walmart runs proprietary AI platforms across stores, apps and virtual environments. Airbus identified 600 generative AI use cases in under a year. BMW runs more than 200 AI solutions in production. These are not pilot programmes. They are portfolios under active governance.

Three questions worth asking before the next board meeting

Does your AI programme have an explicit risk budget — an agreed level of loss acceptable in the highest-risk tier in exchange for potential upside?

Do you have a rebalancing mechanism — a structured process that decides what scales, what stops and what enters the next cycle, driven by the calendar rather than by failure?

Are you measuring portfolio-level return, rather than counting completed initiatives and calling that progress?

If the honest answer to any of these is no, what you have is a list presented as a strategy.

Read the full AIPO™ methodology

The six phases described here are the operating logic. Behind them sits a proprietary advisory toolkit — diagnostics, underwriting frameworks, portfolio design instruments, prototype gate logic and rebalance methods — applied in live client engagements. The full AIPO™ White Paper sets out the complete methodology, governance rules, measurement approach and the regulatory considerations relevant to European enterprises. You can read it at larios.ai/aipo.

Yannis Larios is an Independent Non-Executive Director and AI Strategy Advisor, and the creator of the AIPO™ (AI Portfolio Orchestration) methodology. If this resonates, please consider subscribing to “The Next Agenda” newsletter on LinkedIn. For briefings or board-level discussions, feel free to reach out; conversations about Independent Non-Executive Director roles are also welcome where my expertise adds value.